CI/CD Vulnerability Scanning - How to begin your DevSecOps journey

DevSecOps is a natural evolution

CI and CD (continuous integration and continuous delivery) encourage shifting left: you find and fix issues early by committing small changes often and verifying them through automation, so your main line is always ready to be released.

DevOps (Development and Operations) is a set of practices and cultural philosophies that automate and integrate processes between development and operations. It is a natural evolution of the approaches introduced by CI/CD.

DevSecOps (Development, Security, and Operations) is an approach to culture, automation, and platform design that integrates security as a shared responsibility throughout the development cycle. Not surprisingly, it is just the next step in the evolution of how we develop software.

DevSecOps covers a lot of ground

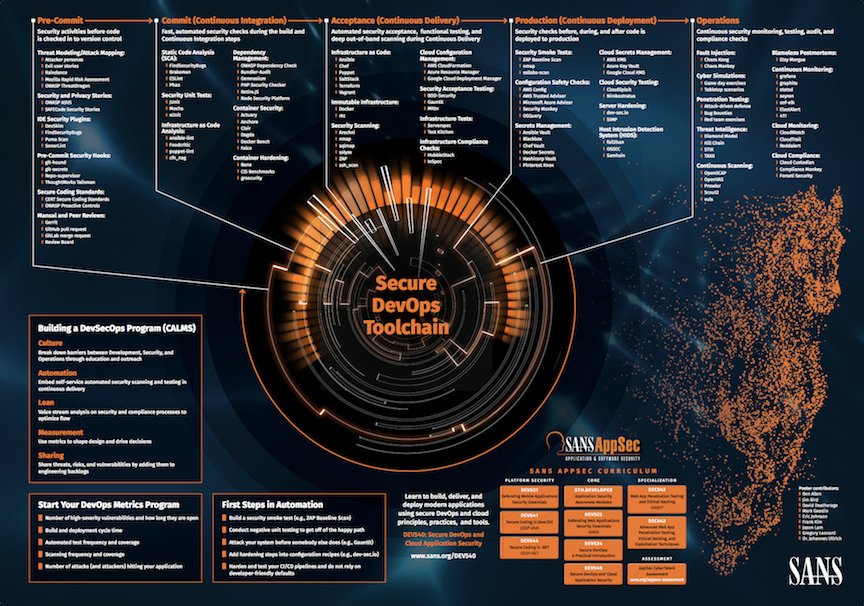

DevSecOps is about more than just scanning for vulnerabilities. Take a look at the image below produced by the SANS Institute.

Source: https://twitter.com/sanscloudsec/status/968590339371126784

You can see that a secure DevOps toolchain covers everything from static application security testing (SAST) to dynamic application security testing (DAST) to security auditing and monitoring, and much more. DevSecOps can be a bit daunting, as there is a lot to think about and a lot of ground to cover.

But from an automation perspective, a great place to start is looking for vulnerabilities in your CI/CD pipelines.

Scanning for vulnerabilities, defects, or weaknesses in your code is an essential part of effective software security. It’s the natural place to start and where you have the most control in an ever-shifting threat landscape.

“U.S. Department of Homeland Security research found that 90% of security incidents result from exploits against defects in software.” - DHS Cyber Security

CI/CD pipelines sit early in the development process and are a vital gate for ensuring continuous delivery. Adding vulnerability scans to them doesn’t generally require a huge investment to get started, and it can be done across a multitude of CI servers. If you can run the scans successfully in a pipeline, the odds are that you can integrate them with your build system at some point, which pretty much means shifting as far left as possible.

Pipelines are also great for evaluating different tools without disturbing existing development processes and developers, at least until you have found suitable tools and technologies.

So, the rest of this blog is mainly written with the automation of vulnerability detection in CI/CD pipelines in mind.

Keep your eyes on the prize

Technology stacks vary quite a bit from organization to organization. It would be impossible to cover every possible stack. But if you are somewhat modern in your approach to software development, then implementing vulnerability scanning in your pipelines for the following areas probably makes sense for you.

Analyzing source code for vulnerabilities

Where else would you start besides secure coding practices? Pull requests/code reviews and coding guidelines are, of course, a massive help in implementing secure coding practices. But developers are human, and mistakes are made.

Additionally, the threat landscape is ever-changing, and what may once have been considered a secure coding practice may not continue to be so. Automating code analysis with a tool or technology that can keep up to date with the ever-changing threat landscape is the more enduring and cohesive approach.

Analyzing 3rd party dependencies for vulnerabilities

Application dependency graphs are deeper than ever, and the use of open-source software has exploded compared to even five years ago.

I read an old (2014) paper from Contrast Security that claimed component downloads doubled from 6 billion to 13 billion within a two-year time frame. That’s breakneck growth but completely believable. I would even say that third-party open source dependencies are ubiquitous in software today.

There have been some pretty well-publicized software supply chain attacks recently. It has become clear that 3rd party dependencies pose significant risks for vulnerabilities and should be part of any vulnerability detection you are doing.

Analyzing container images for vulnerabilities

Have you ever heard the phrase “software is eating the world”? Sometimes it feels like containers are eating software development. And for good reason.

The ability to use a standardized packaging format while simultaneously delivering an immutable environment for our software to run on is a huge step forward in many ways. But as with any step forward, there are considerations:

- Vulnerable libraries can be added to an image during build time.

- The way images are built using layers means vulnerabilities can be obfuscated and not easily visible.

- The bottom layer of an image is a base image representing an operating system. As with any operating system, it can have vulnerabilities.

- The process a container is running may have escalated privileges it doesn’t need.

None of the above means you should avoid containers, but rather that you should scan your images. This enables you to detect vulnerabilities in both your software and the environment it will run on when delivering the whole as a single package.

Analyzing infrastructure as code (IaC) for vulnerabilities

As DevOps practices and culture take hold, defining your infrastructure as code is becoming more and more common.

Infrastructure as Code (IaC) is the provisioning, configuration, and management of infrastructure through machine-readable files, i.e., code.

But code is code whether it is for infrastructure or not. And the ability to quickly consume and scale infrastructure resources also means that, with misconfigurations, you can create security issues more quickly and on a larger scale.

According to a Gartner report from 2019, 99% of cloud security failures will be the user's fault. When cloud resources are misconfigured through IaC, they tend to stay that way too; if the misconfiguration had been obvious in the code, it would not have been made in the first place. Also, if misconfigurations are discovered and fixed manually rather than in the code, they tend to reappear.

So discovering IaC misconfigurations and security issues is essential and should be a standard part of your vulnerability scanning process.

Sharing some experiences

Based on the experience I have gathered implementing vulnerability scanning in pipelines, I suggest you consider the below factors when getting started with DevSecOps in your CI/CD pipelines.

Think compatibility and ease of use

Find a technology/tool that covers as much ground as possible. If your current stack only consists of Node.js and .NET, that doesn’t mean it will always be that limited or static. For example, using `npm audit` or `yarn audit` might get you started quickly but will probably not stand the test of time.

You should, of course, also consider how well a technology/tool integrates into your CI/CD approach. That is the main focus, after all. Ask yourself some questions like:

- Does it return sensible exit codes that fit well with automation?

- How well does it integrate into the many CI servers available today?

- Do I need to implement workarounds or wrappers to get it working well for my pipeline flow?

You get the idea. The broadest possible support for languages and CI servers, along with ease of use, are the primary considerations.
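To make the exit code question a bit more concrete, here is a minimal sketch of how a pipeline step might interpret a scanner's exit code. It assumes a hypothetical scanner that uses 0 for "no findings", 1 for "findings reported", and anything else for a tool error. That convention, and the function names, are my assumptions for illustration, so check the documentation of whatever tool you pick.

```python
import subprocess
import sys


def run_scanner(command):
    """Run a scanner command (a list of arguments) and return its exit code."""
    # check=False: a non-zero exit code is information here, not an exception.
    completed = subprocess.run(command, check=False)
    return completed.returncode


def classify_exit_code(code):
    """Translate an exit code into a pipeline decision.

    Assumed convention (hypothetical scanner):
    0 = no findings, 1 = findings reported, anything else = tool error.
    """
    if code == 0:
        return "pass"
    if code == 1:
        return "fail: findings reported"
    return "error: scanner did not run cleanly"


def main(command):
    """Run the scanner, print the decision, and return the exit code."""
    code = run_scanner(command)
    print(classify_exit_code(code))
    return code
```

In a pipeline step you would end with something like `sys.exit(main([...]))`, passing your real scanner invocation as the argument list. If a tool does not separate "findings" from "the tool itself crashed", you will likely end up writing the kind of wrapper the last question above warns about.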

Configurability rules

In my experience, you will, in all likelihood, encounter false positives. Not all security issues are equally crucial for all code bases, and there are bound to be findings that are not relevant.

Therefore, it is essential to be able to define what applies to a given code base through configuration, preferably in a way that can be tracked and audited alongside the codebase. E.g., a configuration file or annotations in the codebase.
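As a rough illustration of what such tracked configuration could look like, the sketch below filters findings against a list of suppressions that would live in a file next to the code. The entry fields (`id`, `reason`, `expires`) and the identifiers are hypothetical and not taken from any particular tool. The expiry date is my own addition, so that an ignored finding gets revisited instead of being forgotten.

```python
from datetime import date

# Hypothetical suppression entries, e.g. loaded from a file versioned with the code.
SUPPRESSIONS = [
    {"id": "VULN-0001", "reason": "Vulnerable function is never called", "expires": date(2030, 1, 1)},
    {"id": "VULN-0002", "reason": "Accepted until the next release", "expires": date(2020, 1, 1)},
]


def active_suppressions(suppressions, today):
    """Return the ids of suppressions that have not expired yet."""
    return {entry["id"] for entry in suppressions if entry["expires"] >= today}


def relevant_findings(findings, suppressions, today):
    """Drop findings that are covered by an active suppression."""
    ignored = active_suppressions(suppressions, today)
    return [finding for finding in findings if finding["id"] not in ignored]


findings = [{"id": "VULN-0001"}, {"id": "VULN-0002"}, {"id": "VULN-0003"}]
remaining = relevant_findings(findings, SUPPRESSIONS, today=date(2024, 1, 1))
print([finding["id"] for finding in remaining])
```

With the dates above, the first suppression is still active and the second has expired, so only `VULN-0001` is filtered out. Because each entry carries a reason, a reviewer can see in the commit history who decided a finding was irrelevant and why.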

The number of issues, especially in legacy applications, can be substantial, and a gradual approach to mitigating them is often needed. In these cases, you need to be able to set thresholds that break the pipeline when they are exceeded.

- You should be able to configure a severity level at which the pipeline fails, e.g., fail if there are any critical findings.

- You should be able to configure a threshold number per severity level that will break your pipeline. For instance, if you have three (3) high severity findings now, set the threshold to three (3) so that the pipeline fails as soon as the number of high severity findings exceeds it.
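The two kinds of thresholds above can be sketched as a small gate function. This is only an illustration under my own assumptions: the severity names, the shape of a finding (a dict with a `severity` key), and the parameter names `fail_on` and `max_allowed` are all hypothetical. Most tools expose something similar through their own configuration.

```python
from collections import Counter

# Assumed severity scale, ordered from least to most severe.
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


def evaluate_gate(findings, fail_on="critical", max_allowed=None):
    """Return (passed, reasons) for a list of findings.

    fail_on:     any finding at this severity or above fails the pipeline.
    max_allowed: per-severity counts that are tolerated for now,
                 e.g. {"high": 3} fails only when there are more than 3.
    """
    counts = Counter(finding["severity"] for finding in findings)
    reasons = []
    for severity in SEVERITY_ORDER[SEVERITY_ORDER.index(fail_on):]:
        if counts[severity] > 0:
            reasons.append(f"{counts[severity]} {severity} finding(s), none allowed")
    for severity, limit in (max_allowed or {}).items():
        if counts[severity] > limit:
            reasons.append(f"{counts[severity]} {severity} finding(s), threshold is {limit}")
    return (not reasons, reasons)


# Three known high severity findings are tolerated; a fourth breaks the build.
known = [{"severity": "high"}] * 3
print(evaluate_gate(known, max_allowed={"high": 3}))
print(evaluate_gate(known + [{"severity": "high"}], max_allowed={"high": 3}))
```

The idea is to lower the numbers in `max_allowed` as you work through the backlog, so the gate ratchets down over time and never lets the situation get worse.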

Reporting is crucial

As most developers and DevOps practitioners are not security experts, the output and reporting of the chosen technology or tool should focus on helping them understand the findings, how they are introduced, and what to actually do about them. With that in mind, consider the following:

- A report should provide comprehensive guidance on how/where findings are introduced. E.g., the specific file and line along with the entire dependency chain, if it was introduced by using a specific module and version.

- A report should provide a clear, concise, and understandable description of the finding.

- A report should provide excellent guidance on how to mitigate any findings. E.g., bump version 3 of dependency Y to version 4.

- A report should only provide relevant findings. It should not report findings you have configured as irrelevant to the codebase.

- The technology/tool should generate the report. You should not have to use some other tool to generate the report. A report produced that way probably won’t live up to your needs.
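To show the kind of information I mean, here is a sketch of a single report entry that carries a location, a dependency chain, and a suggested fix. The `Finding` class, its field names, and the example values are all made up for illustration. A real tool will have its own report format.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    """One report entry (hypothetical structure)."""
    identifier: str
    severity: str
    description: str
    file: str
    line: int
    dependency_chain: list = field(default_factory=list)
    remediation: str = ""


def render(finding):
    """Format a finding so a non-expert can see what, where, and how to fix it."""
    lines = [
        f"[{finding.severity.upper()}] {finding.identifier}: {finding.description}",
        f"  Location: {finding.file}:{finding.line}",
    ]
    if finding.dependency_chain:
        lines.append("  Introduced via: " + " > ".join(finding.dependency_chain))
    if finding.remediation:
        lines.append(f"  Fix: {finding.remediation}")
    return "\n".join(lines)


example = Finding(
    identifier="VULN-0001",
    severity="high",
    description="Known vulnerability in dependency Y",
    file="package.json",
    line=12,
    dependency_chain=["my-app", "dependency-x@2.1.0", "dependency-y@3.0.0"],
    remediation="Bump dependency Y from version 3 to version 4",
)
print(render(example))
```

If a tool's report cannot answer the three questions in this entry (what is it, where does it come from, what do I do about it), developers will struggle to act on it.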

OWASP is your friend

The Open Web Application Security Project (OWASP) is a nonprofit foundation that works to improve the security of software. The goal of OWASP is to: "Enable organizations to conceive, develop, acquire, operate and maintain applications that can be trusted."

OWASP provides a load of valuable information on top of projects and tools to help you achieve your security goals. If you are constrained to open source software, I suggest you look at the relevant projects.

If you are developing web applications, then you definitely need to be aware of the OWASP Top Ten, which is essentially a compilation of the top ten most common and critical security risks to web applications. I would even go so far as to say that whatever technology/tool you use should support the OWASP Top Ten.

You must mitigate

Identifying vulnerabilities in your pipeline is a great place to start your DevSecOps journey. But you are not done. You need to do something about them.

Define a mitigation strategy to deal with the vulnerabilities:

- Define mitigation priorities. E.g., critical findings of type X must be fixed immediately.

- Define the process for declaring a vulnerability as irrelevant.

- Introduce vulnerabilities as criteria during code reviews and make sure they are visible during the review process.

- And many other things, depending on your case…
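Mitigation priorities become easier to follow up on once they are written down as data. The sketch below maps severities to a maximum number of days before a fix is due and lists the findings that are overdue. The deadlines are placeholder values I picked for illustration, not a recommendation, and the finding structure is hypothetical.

```python
from datetime import date, timedelta

# Placeholder deadlines in days per severity; 0 means "fix immediately".
FIX_WITHIN_DAYS = {"critical": 0, "high": 7, "medium": 30, "low": 90}


def due_date(severity, found_on):
    """Return the date by which a finding of this severity should be fixed."""
    return found_on + timedelta(days=FIX_WITHIN_DAYS[severity])


def overdue(findings, today):
    """Return the findings whose fix deadline has passed."""
    return [
        finding for finding in findings
        if due_date(finding["severity"], finding["found_on"]) < today
    ]


findings = [
    {"id": "VULN-0001", "severity": "critical", "found_on": date(2024, 1, 1)},
    {"id": "VULN-0002", "severity": "high", "found_on": date(2024, 1, 1)},
]
late = overdue(findings, today=date(2024, 1, 5))
print([finding["id"] for finding in late])
```

Here only the critical finding is overdue on January 5, since the high severity one is not due until January 8.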

Summing it all up

A toolchain built purely from open-source tools and technologies is probably going to be fragmented. While these tools and technologies definitely give value, they each have their quirks, and it is not always easy to see what they cover and how well they do it. They also have different output formats, which makes it hard to get an overall view of your security status.

Implementing vulnerability scanning in your pipelines is a great place to start but is by no means enough in itself. You should use it to prevent new vulnerabilities from getting into your code. But you are only going to find vulnerabilities when a pipeline runs. New vulnerabilities may be discovered between pipeline executions, leaving your software vulnerable for some time. This is especially true for stable code bases that don’t change often.

I would suggest looking at some of the paid technologies/platforms instead of taking a pure open-source approach. I like Snyk as it integrates well with the CI/CD approach and many CI servers, thus covering my main focus areas. It also provides excellent reports with good guidance and allows you to monitor your codebase to find new vulnerabilities in between pipeline executions. Finally, I really like the way Snyk consolidates the findings into a single dashboard to allow for a better understanding of your overall security status.
