Refactor pipelines to make transitions between tools easier

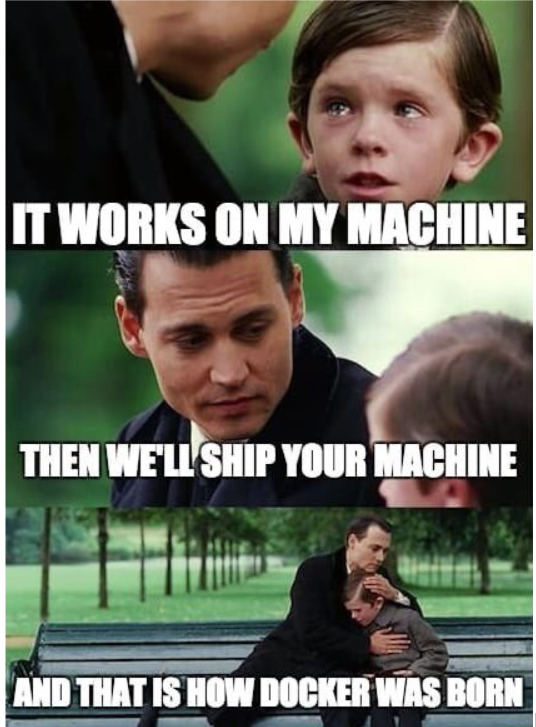

Modern CI/CD tools (GitHub Actions, GitLab CI/CD, etc.) run pipelines on a plethora of Docker containers. This lets us modularize pipeline steps and share them between teams to keep our pipelines clean, but the main purpose of running pipelines this way is to solve the old “works on my machine” problem.

More often than not, as a project (and the pipelines that build and test it) grows, we’ve seen teams spend more and more effort maintaining those pipelines. The result is that the CI/CD system becomes the only place that can build and test the software. It now works on only one machine, and the “works on my machine” problem is back in a new form.

So if your CI/CD tooling goes down, it can be catastrophic for your team: without it, all development comes to a grinding halt. And even if that never happens, pipelines this tightly coupled to one tool make it difficult (maybe even impossible) to migrate to newer, more advanced tooling. And who doesn’t want better tools?

In this post, I share some tips for avoiding this new “works on my machine” problem so that you can take advantage of emerging CI/CD tools in the future.

Containerize each step

Long story short, containerization forces you to think about a step’s input arguments and its desired output. Thinking in containerized steps in CI/CD is much like writing functions in a program: each step takes defined inputs, does one job, and produces defined outputs.
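To make the function analogy concrete, here is a minimal Python sketch that describes a step purely by its inputs (a pinned image, a command, environment variables, and a mounted source directory) and turns it into a docker run invocation. The ContainerStep class and the image name are hypothetical; only the docker run flags used (--rm, -v, -w, -e) are standard Docker CLI options.

```python
"""Sketch: treat a containerized CI step like a function call.

ContainerStep and the image name below are hypothetical placeholders.
"""
import shlex
from dataclasses import dataclass, field
from pathlib import Path


@dataclass(frozen=True)
class ContainerStep:
    """A CI step described only by its inputs and outputs."""
    image: str                                          # pinned image tag
    command: list[str]                                  # what runs inside the container
    env: dict[str, str] = field(default_factory=dict)   # explicit inputs
    workdir: str = "/workspace"                         # where the source tree is mounted

    def docker_argv(self, source_dir: Path) -> list[str]:
        """Build the `docker run` invocation for this step.

        The only things crossing the container boundary are the mounted
        source directory and the declared environment variables.
        """
        argv = ["docker", "run", "--rm",
                "-v", f"{source_dir.resolve()}:{self.workdir}",
                "-w", self.workdir]
        for key, value in sorted(self.env.items()):
            argv += ["-e", f"{key}={value}"]
        argv.append(self.image)
        argv += self.command
        return argv


if __name__ == "__main__":
    lint = ContainerStep(
        image="registry.example.com/ci-steps/lint:1.4.0",  # hypothetical image
        command=["lint", "--strict", "src/"],
        env={"LINT_CONFIG": "lint.toml"},
    )
    # Print the command; pass the list to subprocess.run(..., check=True) to execute it.
    print(shlex.join(lint.docker_argv(Path("."))))
```

Because the step’s whole contract lives in that one argument list, the same step should behave the same way in CI and on a developer’s laptop.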

There are several benefits to containerizing each step like this:

- Versioning gets easier: you have a history of how each step evolved, and different contexts (like projects) can use different versions of the same step.

- Containerization simplifies testing and debugging, as you can run the same container on your development machine when something goes wrong in the pipeline.

- Docker, a popular containerization tool, encourages lightweight images (i.e., steps), which keeps each step quick to pull and start.

- If things are modularized well, independent steps can run in parallel. Many CI/CD tools let you express these dependencies as a directed acyclic graph (DAG).
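As a sketch of the DAG idea, Python’s standard graphlib module can group steps into stages whose members do not depend on each other and can therefore run in parallel. The pipeline below is a hypothetical example, not any particular CI tool’s configuration format.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each step maps to the set of steps it depends on.
PIPELINE = {
    "lint": set(),
    "unit-tests": set(),
    "build-image": {"lint", "unit-tests"},
    "integration-tests": {"build-image"},
    "security-scan": {"build-image"},
    "deploy": {"integration-tests", "security-scan"},
}


def parallel_stages(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group steps into stages; steps within one stage can run in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every step whose dependencies are done
        stages.append(ready)
        sorter.done(*ready)                 # pretend the whole stage succeeded
    return stages


if __name__ == "__main__":
    for number, stage in enumerate(parallel_stages(PIPELINE), start=1):
        print(f"stage {number}: {', '.join(stage)}")
```

Grouping into stages is a simplification: a real scheduler could start a step as soon as its own dependencies finish, rather than waiting for the whole previous stage.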

The internal logic within a container can be complicated (I’ll address that later on), but the logic that ‘touches’ the outside of the container, such as its arguments, mounted files, and outputs, should always be kept simple.

Use a third-party tool to distribute your steps across your organization

Having a repository of all your organization’s containerized CI/CD steps encourages discoverability and reuse: teams don’t have to put in extra work to build a step that someone else has already written. This, in turn, improves collaboration across the organization.

Additionally, if your CI/CD goes down, a few docker pull commands let you run locally the same steps you would run in CI/CD, so your work doesn’t have to stop.
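The following sketch shows what those docker pull commands might look like when scripted. It assumes your steps are published to a shared registry; the registry host, image names, tags, and in-container commands are all hypothetical. By default it only prints the commands (a dry run).

```python
"""Sketch: replay CI steps locally when the CI/CD service is unavailable.

The registry host, image names, tags, and commands are hypothetical.
"""
import shlex
import subprocess
from pathlib import Path

# Ordered (image, command) pairs mirroring the CI pipeline.
STEPS = [
    ("registry.example.com/ci-steps/lint:2.1.0", ["lint", "src/"]),
    ("registry.example.com/ci-steps/test:3.0.2", ["run-tests"]),
    ("registry.example.com/ci-steps/package:1.7.4", ["package", "--out", "dist/"]),
]


def local_commands(steps: list[tuple[str, list[str]]], source_dir: Path) -> list[list[str]]:
    """Return docker commands that pull every step, then run them in order."""
    mount = f"{source_dir.resolve()}:/workspace"
    commands = [["docker", "pull", image] for image, _ in steps]
    commands += [
        ["docker", "run", "--rm", "-v", mount, "-w", "/workspace", image, *command]
        for image, command in steps
    ]
    return commands


def run_locally(steps: list[tuple[str, list[str]]], source_dir: Path, dry_run: bool = True) -> None:
    for argv in local_commands(steps, source_dir):
        print(shlex.join(argv))
        if not dry_run:
            subprocess.run(argv, check=True)  # stop at the first failing command


if __name__ == "__main__":
    run_locally(STEPS, Path("."))
```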

Utilize task tools to encapsulate complex logic

Bash is great, but it dates back to 1989 and is showing its age, and the modern programming languages we already use in our projects are far better at expressing complex logic. And let’s be real: everyone claims to know Bash, but few of us really do (myself included).

So if your build pipeline needs complex logic (anything beyond simple if-else branching), use a task tool: it lets you write that logic in your project’s own programming language, one you already know.

Most languages have a task tool; some notable examples are:

- npm scripts for Node.js

- Invoke for Python

- Rake for Ruby

- Maven for Java

- Bazel for C

Use these task tools in your containers instead of writing Bash scripts. That way, you can apply other software development best practices (like unit testing) to keep your logic working through thick and thin, without switching context between ‘actual’ code and CI/CD code.
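Here is a minimal sketch of the kind of logic that often ends up as fragile Bash: deriving a Docker image tag from a Git ref. Written in Python, it can be covered by ordinary unit tests. The image_tag helper and its tagging scheme are hypothetical; if you use Invoke, a function decorated with @task could call it, but the logic itself does not depend on any task tool.

```python
"""Sketch: CI logic as plain, unit-testable Python instead of Bash.

image_tag and its tagging scheme are hypothetical examples.
"""
import re
import unittest


def image_tag(git_ref: str, commit_sha: str) -> str:
    """Derive a Docker image tag from a Git ref and commit SHA.

    - release tags such as "refs/tags/v1.4.0" become "1.4.0"
    - branches become "<sanitized-branch>-<short sha>"
    """
    release = re.fullmatch(r"refs/tags/v(\d+\.\d+\.\d+)", git_ref)
    if release:
        return release.group(1)
    branch = git_ref.removeprefix("refs/heads/")
    # Docker tags allow [A-Za-z0-9_.-], may not start with "." or "-",
    # and are limited to 128 characters (120 + "-" + 7-char SHA fits).
    safe = re.sub(r"[^A-Za-z0-9_.-]", "-", branch).lstrip(".-")[:120]
    return f"{safe or 'branch'}-{commit_sha[:7]}"


class ImageTagTest(unittest.TestCase):
    def test_release_tag(self):
        self.assertEqual(image_tag("refs/tags/v1.4.0", "0123456789abcdef"), "1.4.0")

    def test_feature_branch(self):
        self.assertEqual(
            image_tag("refs/heads/feature/login", "0123456789abcdef"),
            "feature-login-0123456",
        )

    def test_tag_length_limit(self):
        long_ref = "refs/heads/" + "x" * 300
        self.assertLessEqual(len(image_tag(long_ref, "0123456789abcdef")), 128)
```

Saved as, say, ci_tasks.py (a hypothetical file name), the tests run with python -m unittest ci_tasks, locally or inside the step’s container.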

Secrets and environment variables outside of CI/CD

Secrets and environment variables accumulate over a project’s lifetime and add to its complexity, which is why it helps to sort them into two separate categories:

Build-time: These are needed during the CI/CD process to package the project into usable artifacts. You should always look to minimize these, but you’ll never truly be rid of them.

Run-time: These are needed when the application starts up (or while it is running). They are plentiful, and people often put them into CI/CD, but they are better kept out of it entirely. For example, teams often bake database credentials and URLs into the build in CI/CD. But what if you need to change them while the software is live? If they live in your CI/CD, you need to make a new release, which is far less convenient than editing them in a third-party system like HashiCorp Vault. Keeping run-time values out of CI/CD also reduces the impact of your CI/CD going down.
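A minimal sketch of the alternative: the application reads its database settings when it starts instead of having them baked in at build time. The variable names are hypothetical, and the values come from the process environment; in production, a secrets manager such as HashiCorp Vault could supply them, but that integration is not shown here.

```python
"""Sketch: load run-time settings at start-up rather than at build time.

DATABASE_URL and DATABASE_PASSWORD are hypothetical variable names.
"""
import os
from collections.abc import Mapping
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DatabaseSettings:
    url: str
    password: str = field(repr=False)  # keep the secret out of logs and tracebacks


def load_settings(environ: Mapping[str, str] = os.environ) -> DatabaseSettings:
    """Read settings when the app starts; fail fast if any are missing."""
    required = ("DATABASE_URL", "DATABASE_PASSWORD")
    missing = [name for name in required if not environ.get(name)]
    if missing:
        raise RuntimeError(f"missing run-time settings: {', '.join(missing)}")
    return DatabaseSettings(url=environ["DATABASE_URL"], password=environ["DATABASE_PASSWORD"])
```

With this approach, changing a value means restarting the application with new settings rather than cutting a new release.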

Closing thoughts

If you follow each of the steps listed above, you’ll be on your way to a faster, more portable CI/CD setup that makes everything a breeze, including switching tools. Of course, that’s all easier said than done. But hopefully none of these steps seems too daunting!
