(Acceptance) Test-Driven Development: An Introduction

Test-Driven Development (TDD) is familiar to most developers. Acceptance Test-Driven Development (ATDD) sits more on the business requirements side of the process and may not be as familiar. Both techniques allow for shorter development cycles. This practical walk-through shows you why and how.

As someone who’s been in the DevOps business for a while, the transformation journeys I’ve witnessed are what make this job worth it for me.

I’ve seen how moving to more collaborative ways of working and leveraging cross-disciplinary practices go hand in hand: letting developers expand their skill sets to be more comprehensive. Ultimately, this fosters better understanding between narrow disciplines so the end goal – a happy customer – can be achieved better and faster.

Test-Driven Development (TDD) is a bread and butter technique used by most developers, while Acceptance Test-Driven Development (ATDD) sits more on the business requirements side of the process and hence may not be as familiar to developers. However, both techniques allow for shorter development cycles, bringing the needs of the customer to the forefront of the project’s work.

Also, both TDD and ATDD, although widely adopted in the industry, are not used to their full potential. Here I’ll teach you how to unlock any missing possibilities.

Back to basics: Shifting left

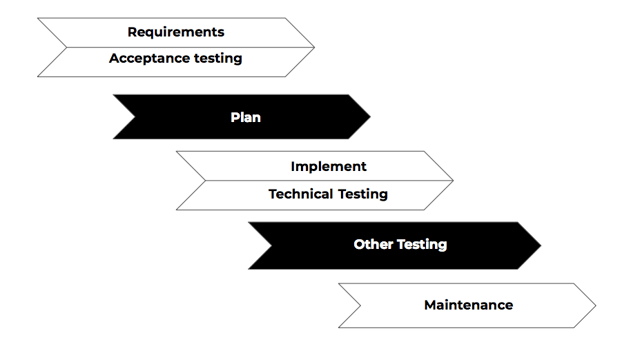

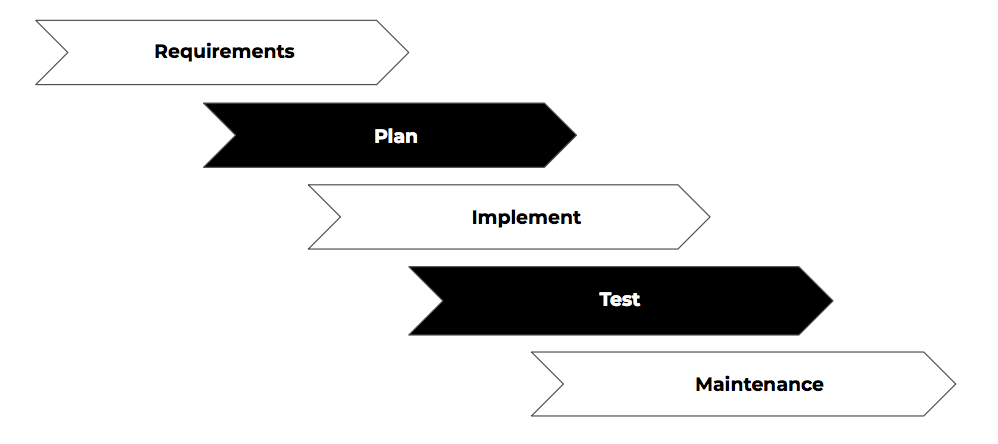

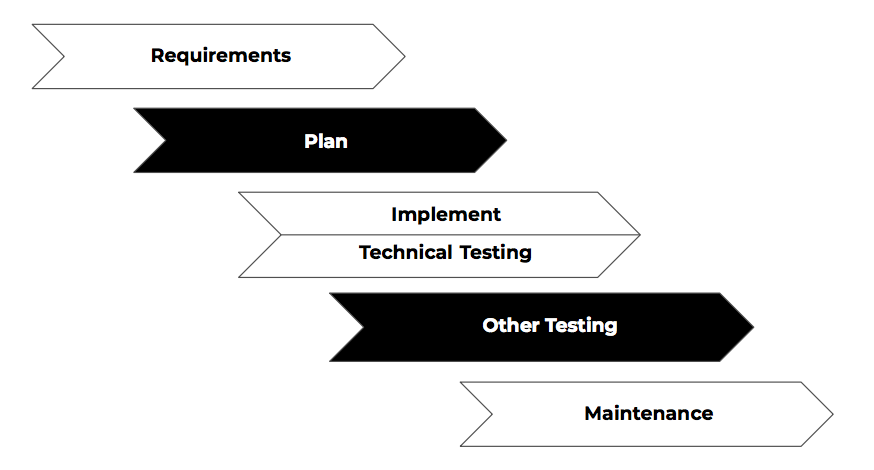

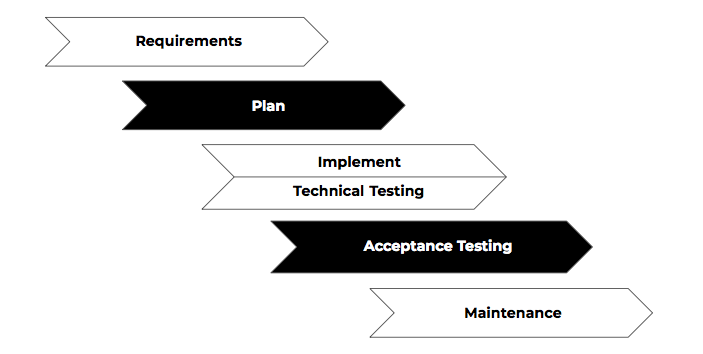

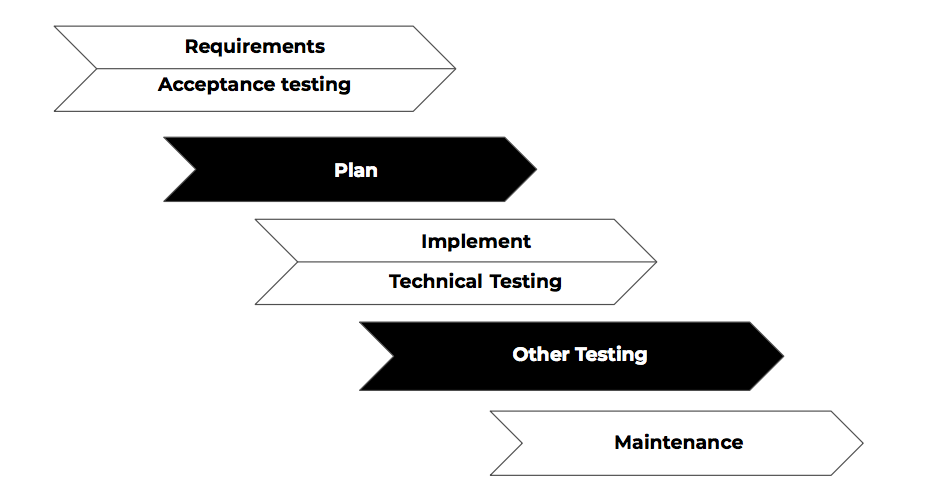

Any software goes through the following stages during its lifespan:

First, someone gets an idea that solves a problem or enables you to do something previously beyond your reach. The idea sets certain requirements, which are then planned, both technically and financially, and implemented. Next, the quality of the solution needs to be assessed: we need to verify that the software works technically as intended and validate that it fulfills its specification. Finally, the software needs to be maintained until it is taken out of use.

Don’t forget: these phases are unavoidable. Software development can’t bypass any of them; each must be addressed at some point. Missing requirements or forgone maintenance just mean you’ve addressed that phase very poorly, but you’ve addressed it nevertheless.

The aim of Agile and DevOps is not to circumvent work but to parallelise it by employing smart work practices and leveraging automation. This is called shifting left.

Here, as soon as the first requirement is devised, we move straight on to planning, implementing, testing and releasing it to our end users, while other requirements are created, planned, implemented, tested and released in parallel. We want to start each phase for a feature as early as possible. In other words, Agile and DevOps aim to shift as much work to the left as possible.
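A toy calculation makes the effect visible. Assume, purely for illustration, that every feature passes through five phases of one week each, that a phase works on one feature at a time, and that the phase-by-phase approach releases everything together at the end. The numbers and function names below are hypothetical, not measurements.

```python
PHASES = ['requirements', 'plan', 'implement', 'test', 'release']


def batch_release_weeks(feature_count, weeks_per_phase=1):
    """Phase by phase: each phase finishes for ALL features before the next starts."""
    finished = feature_count * len(PHASES) * weeks_per_phase
    # Assumption: nothing reaches users until every feature has passed the last phase.
    return [finished] * feature_count


def shifted_left_release_weeks(feature_count, weeks_per_phase=1):
    """Shifted left: a feature enters the next phase as soon as that phase is free."""
    first = len(PHASES) * weeks_per_phase
    # Each later feature follows one phase slot behind the previous one.
    return [first + i * weeks_per_phase for i in range(feature_count)]


print(batch_release_weeks(3))         # [15, 15, 15]
print(shifted_left_release_weeks(3))  # [5, 6, 7]
```

Under these assumptions the total amount of work is identical in both cases; only the moment at which each feature reaches the end user changes.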

Test-Driven Development (TDD)

One way to “shift left” is to practice Test-Driven Development (TDD). Originally “discovered” in the early 2000s, it has since grown to be a staple in a good developer’s toolbox. The biggest benefit of this practice is that it moves part of the testing work, the technical unit testing, to run in parallel with the implementation itself. This is great, as the code and the tests that verify it are completed hand in hand, which forces developers to hold themselves to higher standards.

Shifting left not only made it possible to parallelise work, it also created a completely new way of using testing as a design tool for the code being implemented. This results in better quality code to begin with, in addition to shifting work left.

Let’s take a small example, written in Python, of how we did things back in the day before TDD.

### Thingamabob.py

```python
class Thingamabob(object):
    def __init__(self, username, password):
        self.username = username
        self.password = password
        self.connection = None

    def connect(self):
        # Con is the connection class, assumed to be defined elsewhere
        self.connection = Con(self.username, self.password)

    def disconnect(self):
        self.connection.disconnect()

    def do_stuff(self):
        return self.connection.do_stuff()
```

We need a Thingamabob that uses an authenticated network connection to do stuff. Based on this simple spec, we might create something like the above.

### test_my_module.py

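```python
import unittest

from Thingamabob import Thingamabob


class TestThingamabob(unittest.TestCase):
    def test_do_stuff(self):
        thing = Thingamabob('demo', 'mode')
        thing.connect()
        # assertEqual replaces the deprecated assertEquals alias
        self.assertEqual(thing.do_stuff(), 'OK')
        thing.disconnect()
```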

And, of course, we also create the accompanying unit test for it.

Great, right? So, with TDD we can make sure that we never “forget” to create the test case as well. But let’s see what happens when we start by writing just the test case.

I need a Thingamabob, to do stuff.

### test_my_module.py

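```python
import unittest

from Thingamabob import Thingamabob


class TestThingamabob(unittest.TestCase):
    def test_do_stuff(self):
        thing = Thingamabob('demo', 'mode')
        self.assertEqual(thing.do_stuff(), 'OK')
```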

There! Next, what can I implement to make the test pass...

It seems I need to structure my code so the connection is done seamlessly under the hood. I know, I’ll use Python’s context managers to achieve this!

### Thingamabob.py

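```python
from contextlib import contextmanager


class Thingamabob(object):
    def __init__(self, username, password):
        self.username = username
        self.password = password

    @contextmanager
    def make_connection(self):
        # Con is the connection class, assumed to be defined elsewhere
        connection = Con(self.username, self.password)
        try:
            yield connection
        finally:
            # Disconnect even if the caller's block raises
            connection.disconnect()

    def do_stuff(self):
        with self.make_connection() as connection:
            return connection.do_stuff()
```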

In that example, we created a much nicer interface for Thingamabob, where the end user (the calling code) does not need to worry about managing the connection. In addition to taking fewer lines to implement, the latter implementation is more future-proof: if the connection changes somehow, we do not break the test case, whose only concern should be verifying “doing stuff”. The first example may seem simpler, but it isn’t. The test case guided our design decisions towards better code.
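To try the test-first design without a network, here is a minimal, self-contained sketch. `FakeCon` is a hypothetical stand-in for the `Con` class, which the example above never shows. The `connection_factory` parameter and the `last_connection` attribute are assumptions added only so that the sketch runs on its own and the test can inspect the connection.

```python
import unittest
from contextlib import contextmanager


class FakeCon:
    """Hypothetical stand-in for Con: records calls instead of using the network."""

    def __init__(self, username, password):
        self.username = username
        self.password = password
        self.disconnected = False

    def do_stuff(self):
        return 'OK'

    def disconnect(self):
        self.disconnected = True


class Thingamabob:
    # connection_factory is an assumption of this sketch, not part of the original class.
    def __init__(self, username, password, connection_factory=FakeCon):
        self.username = username
        self.password = password
        self.connection_factory = connection_factory
        self.last_connection = None  # kept only so the test can inspect it

    @contextmanager
    def make_connection(self):
        connection = self.connection_factory(self.username, self.password)
        self.last_connection = connection
        try:
            yield connection
        finally:
            connection.disconnect()

    def do_stuff(self):
        with self.make_connection() as connection:
            return connection.do_stuff()


class TestThingamabob(unittest.TestCase):
    def test_do_stuff(self):
        # The test only talks about "doing stuff": no connect or disconnect calls.
        thing = Thingamabob('demo', 'mode')
        self.assertEqual(thing.do_stuff(), 'OK')

    def test_connection_is_closed_afterwards(self):
        thing = Thingamabob('demo', 'mode')
        thing.do_stuff()
        self.assertTrue(thing.last_connection.disconnected)
```

Saved as a test module, this can be run with `python -m unittest`. Note that `test_do_stuff` would stay unchanged even if the way connections are opened and closed were reworked.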

Acceptance Test-Driven Development (ATDD)

TDD is a principle that uplifted the implementation of code by not just pairing technical testing with implementation – thereby enabling parallelisation – but also revealing a completely new, unexpected way of working.

Acceptance testing goes by many names including User Acceptance Testing (UAT), end-to-end testing, Alpha testing, and Beta testing. Today, new test automation tools have enabled us to automate this area of testing, one that previously could only begin when most if not all implementation work was finished. With open source tools like Robot Framework or Cucumber, we can automate the end user interaction in our application instead of doing it manually.

One might wonder why a practice heavily concerned with the automation of manual testing is presented alongside the comparatively technical practice of TDD. Let me assure you, it’s not just because they sound the same. ATDD also enables us to “shift left” work that previously happened later on.

Automating this last stage of testing takes manual work off people’s hands, and automation is one of the key pillars of DevOps. Just like with TDD, we can shift left even more by employing Acceptance Test-Driven Development (ATDD), a practice where we start writing test cases far earlier than before: while gathering requirements.

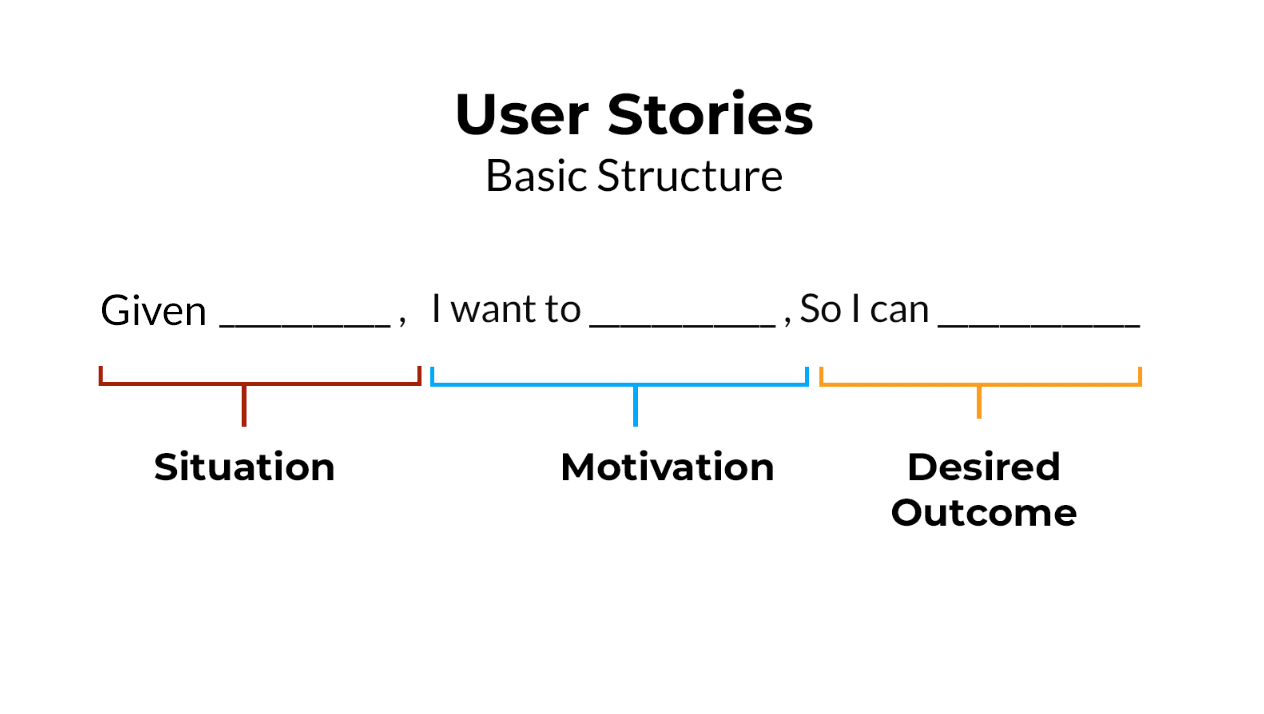

At its core, ATDD leverages another well-worn practice, user stories, to define our specifications as informal, natural-language descriptions from the perspective of our imagined end user.

Back in the day, software development would have looked something like this:

1. Write the specification of a requirement in a document.

2. Sink time into understanding the document and, after much discussion, plan the implementation around a vague idea of what is wanted.

3. Implement the feature as code.

4. Sink yet more time into understanding whether the implementation works as intended and whether it fulfills the original requirement.

5. (Usually) go back to implementing the feature in a different way now that you have more information.

6. New information materializes: repeat steps 3 to 5 again, and again.

Now, an Acceptance Test-Driven Development (ATDD) workflow looks like this:

1. Write the specification in a unified format as test cases until the feature is understood by everyone.

2. Implement the feature while automating the test case.

3. If new information that warrants a change emerges, repeat step 1 and continue.

4. Verify the result by running the test case. Done.

### my_web_store.robot

```robotframework
*** Test Cases ***
Web store works
    Given user logs in with username 'demo' and password 'mode'
    When user searches 'vacuum tubes'
    And adds 'vacuum tubes' to the cart
    Then user is able to complete the payment

Web store has special offers
    Given there is special offer on 'drum sequencers'
    And user logs in with username 'demo' and password 'mode'
    When user searches 'vacuum tubes'
    Then user is able to see the special offer
    And adds 'drum sequencers' to the cart
    And user is able to complete the payment
```

Example done using Robot Framework
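Each Given/When/Then line has to be backed by executable code before the test case can run. As a rough sketch of what that backing could look like, the plain-Python class below implements the steps of the first test case against an in-memory store. Every name here (`FakeWebStore`, `WebStoreSteps`, the method names, the catalogue contents) is hypothetical and not part of the original example. A real suite would drive the actual application and would expose the steps as Robot Framework keywords as described in its user guide.

```python
class FakeWebStore:
    """Hypothetical in-memory stand-in for the web store under test."""

    def __init__(self):
        self.users = {'demo': 'mode'}
        self.catalogue = {'vacuum tubes', 'drum sequencers'}
        self.logged_in_user = None
        self.cart = []

    def log_in(self, username, password):
        if self.users.get(username) != password:
            raise AssertionError(f'Login failed for {username!r}')
        self.logged_in_user = username

    def search(self, term):
        return sorted(item for item in self.catalogue if term in item)

    def add_to_cart(self, item):
        if item not in self.catalogue:
            raise AssertionError(f'{item!r} is not sold here')
        self.cart.append(item)

    def pay(self):
        # Payment succeeds only for a logged-in user with a non-empty cart.
        if self.logged_in_user is None or not self.cart:
            return False
        self.cart.clear()
        return True


class WebStoreSteps:
    """One method per Given/When/Then line of 'Web store works'."""

    def __init__(self, store):
        self.store = store
        self.search_results = []

    def user_logs_in(self, username, password):
        self.store.log_in(username, password)

    def user_searches(self, term):
        self.search_results = self.store.search(term)

    def adds_to_cart(self, item):
        assert item in self.search_results, f'{item!r} was not found'
        self.store.add_to_cart(item)

    def user_is_able_to_complete_the_payment(self):
        assert self.store.pay(), 'Payment did not go through'


def web_store_works():
    """Runs the first scenario step by step and returns the step object."""
    steps = WebStoreSteps(FakeWebStore())
    steps.user_logs_in('demo', 'mode')            # Given
    steps.user_searches('vacuum tubes')           # When
    steps.adds_to_cart('vacuum tubes')            # And
    steps.user_is_able_to_complete_the_payment()  # Then
    return steps
```

Calling `web_store_works()` raises an `AssertionError` as soon as a step fails, which is the behaviour an acceptance test runner relies on to mark the scenario as failed.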

Not only do we reap the rewards of using the user story format, allowing us to understand the problem better, we also focus the discussion around the feature from an end user perspective which in turn forces all participants – business, design, developers, testers, operations – to look at the bigger picture and why they’re doing the work in the first place.

Once everyone has understood what’s at stake, the test case becomes a trackable entity through every other phase of the life cycle. It is versioned with the code in the version control system, acts as living documentation of the business requirement, and can always be executed, thus proving that the feature works.

TDD + ATDD

I’ve shown you how technical unit testing through TDD elevated test cases to the role of a code design tool. Likewise, ATDD not only makes it possible to automate the largely manual testing phase, but also moves that testing to the core of requirements specification.

So, are you designing simpler code with TDD? Have you found it easier to co-operate with business and development thanks to ATDD? Let us know your thoughts or suggestions for future topics for me to write about on our contact page.
