How to simplify Atlassian Server with Docker

As an Atlassian Platinum partner, we've encountered just about every scenario you can imagine, including difficult situations with self-managed, self-hosted server setups. In this blog we’ll describe one of these situations and show how you can overcome it by leveraging containers.

Occasionally, we are asked to help upgrade an instance of an Atlassian application and these instances are often years old.

Typically, the people who installed the instance are no longer available. There’s no knowledge of how it was installed and configured, so no one knows how to upgrade it.

Quite often everything has been done manually with lots of different roles involved in the setup. There might have been an infrastructure team for hardware, a database team for a database, an application team for a proxy, etc. It can seem overwhelming and the upgrade can be time-consuming and painful. Something that should be fairly painless can take hours or even days.

The consequences of this type of situation are pretty easy to anticipate: possible security issues, painful upgrade processes, and an inability to leverage new features and improvements. Anxiety too. What about the audit on the horizon? And then there’s the added cost of bringing in experts like Eficode.

As a business, we do very well from helping customers in these types of situations. But we’re also a DevOps company. We cringe at these types of scenarios, and we really don’t enjoy painful upgrades any more than you do.

Solution

The goal is to leverage technology to simplify installation, upgrades, and migrations.

- Installation should be simple and require little knowledge.

- Upgrades should be easy and take so little time that they’re done regularly.

- Migration to a new host should be relatively painless.

- As few people as possible should be involved to reduce coordination overhead.

- It should be reproducible and resistant to knowledge loss.

Technology choices

I have chosen to use Docker and docker-compose to deploy and manage the application instance. They provide the ability to manage the application as code, package direct dependencies together, orchestrate the deployment of the different pieces together, and make it all reproducible.

Atlassian’s Bitbucket Server is the application used for this demo.

Atlassian's Bitbucket Docker image will be used. We want to make managing and maintaining the instance as painless as possible, and building our own images doesn't make much sense.

The official PostgreSQL image will be used for the database. PostgreSQL is free, officially supported by Atlassian, and performs really well. To the best of my knowledge Atlassian itself uses this database, so it’s a really good choice.

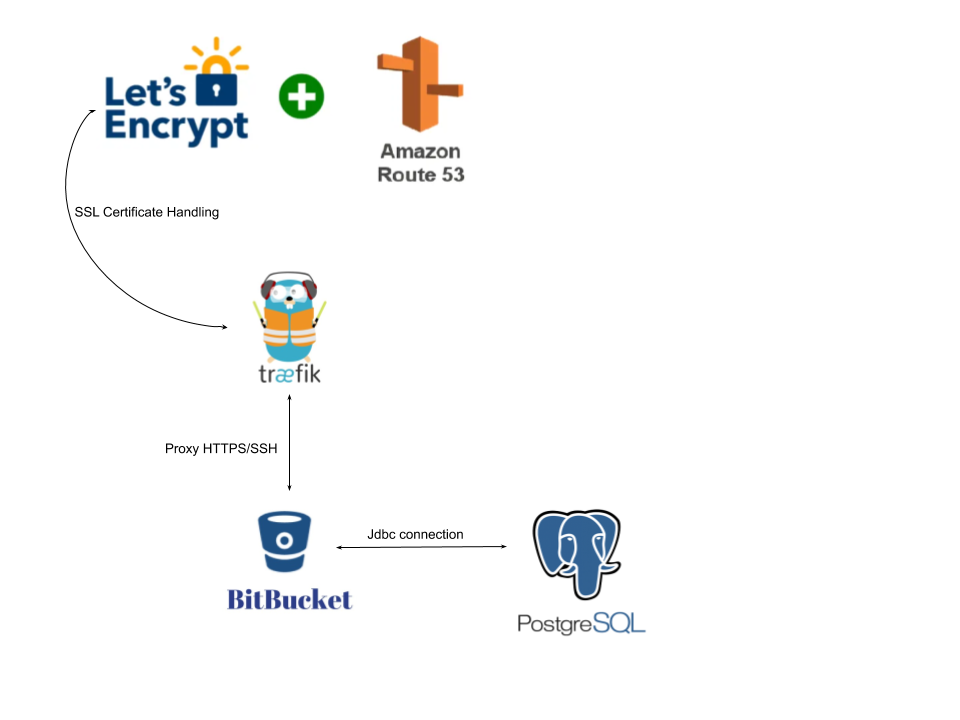

Finally, I will be using Traefik as a proxy. It is free, super easy, performs well, and supports HTTP/HTTPS along with TCP. We need a proxy that supports TCP for SSH functionality. Additionally, it integrates pretty much out of the box with Let’s Encrypt and the major DNS providers that provide ACME protocol support.

Architecture & infrastructure

All of the major pieces should be deployed on one virtual machine (VM) and orchestrated by docker-compose. Backups can be done with nightly snapshots of the VM or scripted. You could also have a CI server execute backup scripts and store the backups remotely. There are lots of possibilities.

The reasoning behind having all the pieces on a single VM is straightforward. The idea here is simplicity. One small repository managing everything needed to provide the application. One VM making deployment and backup as simple as possible.

Also, with Bitbucket, there is a tight coupling between the file system and the database. For example, the database stores metadata that references hashes kept in the file system. So a proper backup requires the database and the application data to be backed up together.
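One way to keep the two halves of a backup together is to name them with a shared timestamp and refuse to restore from a mismatched pair. The following is a minimal sketch of that idea only. The file naming scheme and the function names are hypothetical, and the demo's backup-restore.sh may well name its files differently.

```python
from datetime import datetime, timezone
from typing import NamedTuple


class BackupPair(NamedTuple):
    data_tarball: str  # compressed copy of Bitbucket's data (home) directory
    sql_dump: str      # PostgreSQL dump taken in the same backup run


def backup_pair(taken_at: datetime, prefix: str = "bitbucket") -> BackupPair:
    """Name both halves of a backup with one shared UTC timestamp.

    The naming scheme is an assumption made for illustration.
    """
    stamp = taken_at.astimezone(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    return BackupPair(f"{prefix}-data-{stamp}.tar.gz", f"{prefix}-db-{stamp}.sql")


def timestamp_of(filename: str) -> str:
    """Extract the timestamp between the last '-' and the first '.' after it."""
    return filename.rsplit("-", 1)[-1].split(".", 1)[0]


def is_matching_pair(data_tarball: str, sql_dump: str) -> bool:
    """True only if both files carry the same timestamp, i.e. come from one run."""
    return timestamp_of(data_tarball) == timestamp_of(sql_dump)
```

A restore routine could call is_matching_pair before touching any volume, so a data directory is never combined with a database dump from a different night.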

Architecturally, there are only three necessary pieces: Traefik as a proxy, Bitbucket, and the PostgreSQL database.

I have also created a domain on AWS so I can configure Traefik to manage the SSL certificates for me through Route53. Traefik can make SSL certificate management really easy.

The repository

How difficult is this? Well, not very. You can see a demo repository here.

There are only four files of importance.

- .env file for setting some environment variables. 39 lines.

- backup-restore.sh script. 153 lines.

- backup-restore-compose.yml. 30 lines.

- docker-compose.yml. 88 lines.

Any real complexity is in the backup-restore.sh script. The rest is just YAML and variable assignments.

In the demo repository we are using Amazon’s Route53 with Let’s Encrypt to manage our SSL certificates, so we need an AWS access key, the corresponding secret, and an associated email address. You could mount your own certificates into the Traefik container, but it’s nice to not have to worry about certificate management.

I have also registered the domain thedukedk.net on Route53.

In the following sections I have provided some screencasts to demonstrate how to use this solution.

Installation

All you really have to do is fill out the variables in the .env file. If you don’t have a DNS entry pointing to the host already, add the SERVER_PROXY_NAME to your hosts file.
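Since the whole installation hinges on the .env file being filled out, a small pre-flight check can catch empty values before anything is started. This is a minimal sketch, not part of the demo repository. Only SERVER_PROXY_NAME is named in this post; the other variable names in REQUIRED are assumptions and should be replaced with whatever the actual .env file defines.

```python
from typing import Dict, List

# SERVER_PROXY_NAME comes from the post; the remaining names are assumed.
REQUIRED = [
    "SERVER_PROXY_NAME",
    "AWS_ACCESS_KEY_ID",
    "AWS_SECRET_ACCESS_KEY",
    "ACME_EMAIL",
]


def parse_env(text: str) -> Dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_variables(text: str, required: List[str]) -> List[str]:
    """Return the required variables that are absent or left empty."""
    env = parse_env(text)
    return [name for name in required if not env.get(name)]
```

Running missing_variables over the contents of .env and stopping when the list is non-empty keeps a half-configured setup from ever being brought up.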

Next, bring everything up by executing docker-compose up -d.

The screencast shows the whole process.

You can see in the screencast that everything took only a few minutes and required one command.

- Traefik dashboard can be reached on localhost:8080.

- Traefik handles HTTPS on port 443 and routes web traffic to the bitbucket-web service.

- Traefik handles SSH on port 7999 and routes SSH traffic to the bitbucket-ssh service.

- Traefik works with Let’s Encrypt and Route53 to generate the SSL certificate for HTTPS.

- You can use the postgres container name postgres-bitbucket when connecting Bitbucket to the database.
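Once the containers are up, the ports listed above can be probed to confirm that Traefik is listening. The sketch below only tests that a TCP connection can be opened; it does not validate the certificate or log in over SSH. The port numbers come from the list above, while the labels in ENDPOINTS and the function names are made up for this example.

```python
import socket
from typing import Dict

# Ports as described in the post; the labels are illustrative only.
ENDPOINTS = {
    "traefik-dashboard": 8080,
    "bitbucket-https": 443,
    "bitbucket-ssh": 7999,
}


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


def check_endpoints(host: str, endpoints: Dict[str, int] = ENDPOINTS) -> Dict[str, bool]:
    """Probe every endpoint on one host and report which ones accept connections."""
    return {name: port_is_open(host, port) for name, port in endpoints.items()}
```

Calling check_endpoints("localhost") on the Docker host gives a quick yes/no per port; from another machine, pass the name set in SERVER_PROXY_NAME instead.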

To bring the whole setup down, execute docker-compose stop.

You can also execute docker-compose down to stop and delete the containers. All the required data is kept in docker volumes so no data will be lost if the containers are deleted.

| Volume name | Contents |
| --- | --- |
| bitbucket_data | Bitbucket’s data (home) directory. |
| bitbucket_db | Postgres database files. |
| bitbucket_letsencrypt | The certificate and key data. Stored in the file acme.json. |

Upgrading

The most complex upgrade scenario would involve upgrading the database and the application, so let's look at that process.

In the screencast you can see:

- The Bitbucket version was 7.0.2 and the PostgreSQL version was 9.5.

- I brought down the containers.

- I created a backup with the backup-restore.sh script.

- I deleted the postgres docker volume and upgraded the postgres version in the two compose files.

- I started the postgres container with the new version so it could initialize.

- I stopped the postgres container and restored the data from the SQL backup file.

- I upgraded the Bitbucket version in the docker compose file and brought the containers up again.
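The version bumps in the steps above are just edits to the image tags in the compose files, which makes them easy to script. Below is a minimal sketch under stated assumptions: it assumes the compose files reference the images as postgres and atlassian/bitbucket-server with an explicit tag, and set_image_tag is a hypothetical helper, not something from the demo repository. The target tags in the usage note are placeholders; check Atlassian's supported platforms before picking real ones.

```python
import re


def set_image_tag(compose_text: str, image: str, new_tag: str) -> str:
    """Replace the tag on every `image: <image>:<tag>` line in compose file text.

    Lines for other images, and images without an explicit tag, are left alone.
    """
    pattern = re.compile(
        r"^(\s*image:\s*[\"']?" + re.escape(image) + r"):[^\s\"']+",
        re.MULTILINE,
    )
    # A function is used as the replacement so new_tag is inserted literally.
    return pattern.sub(lambda match: f"{match.group(1)}:{new_tag}", compose_text)
```

For example, set_image_tag(text, "postgres", "11") would be applied to both compose files, and set_image_tag(text, "atlassian/bitbucket-server", "7.2.0") to docker-compose.yml, before bringing the containers back up.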

You can see in the screencast that the whole process took around 5 minutes. A good portion of that was startup time for Bitbucket.

The time will obviously vary depending on the size of Bitbucket’s data directory. But even an instance with a file system in the 10 GB range will only take a few minutes to back up. The database backup and restore will be a bit faster.

Migrate host

If a situation arises where you need to migrate the setup to a new host, all you really need to do is spin up an unconfigured setup and then restore the data directory and database from a backup. The process is as follows.

In the screencast you can see:

- A fresh instance of Bitbucket, Traefik, and PostgreSQL was spun up.

- Bitbucket was neither configured nor connected to PostgreSQL.

- The backup-restore.sh script was executed with a compressed tarball of the data directory and an SQL dump of the database from the other host.

- The backup-restore.sh script restarted the containers after the restore process, after which everything was configured and connected.

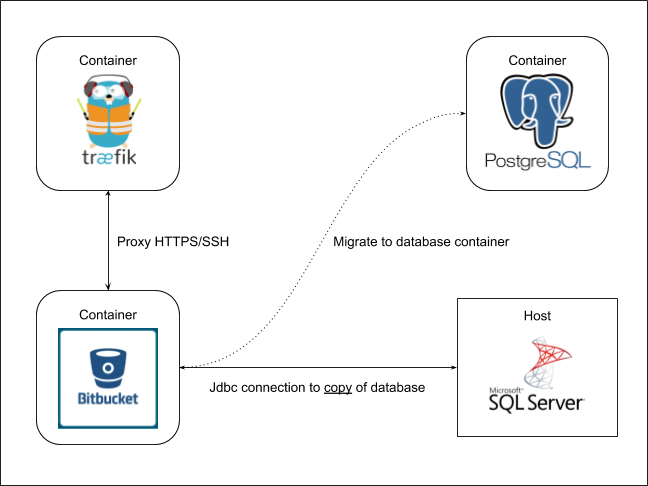

Migration to Docker

If you would like to switch to a solution like this, it is not as hard as you might think. Suppose you have a setup where the server is installed manually on one machine and the database runs on a remote machine with a different type of database, such as MSSQL.

What you want to do is get the application restored in a container but connected to a copy of the MSSQL database. You then use the built-in database migration feature to migrate to the containerized PostgreSQL database.

To make sure the containerized instance will connect to the copy of the existing database you need to edit the bitbucket.properties file in the backup tarball so that it points to the database copy.

jdbc.url=jdbc:sqlserver://<MSSQL SERVER>:<PORT>;databaseName=<NAME OF DATABASE COPY>;
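The edit itself is a one-line change, so it can be scripted once the bitbucket.properties file has been extracted from the tarball. The following is a rough sketch: point_at_database_copy is a hypothetical helper, and the server, port, and database name you pass in are your own values. It only rewrites the jdbc.url line and leaves every other property untouched.

```python
def point_at_database_copy(properties_text: str, server: str, port: int, database: str) -> str:
    """Return bitbucket.properties text with jdbc.url pointing at the database copy.

    If no jdbc.url line exists, one is appended.
    """
    new_line = f"jdbc.url=jdbc:sqlserver://{server}:{port};databaseName={database};"
    lines = properties_text.splitlines()
    replaced = False
    for index, line in enumerate(lines):
        if line.strip().startswith("jdbc.url="):
            lines[index] = new_line
            replaced = True
    if not replaced:
        lines.append(new_line)
    return "\n".join(lines) + "\n"
```

Read the file, pass its contents through this function, write the result back, and then repack the tarball before handing it to the restore step.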

The process is as follows.

In the screencast you can see:

- We bring up a fresh instance of all containers.

- We restore a compressed tarball where the bitbucket.properties file has been edited to point at a copy of the MSSQL server and database.

- We bring up all the containers again and verify that repositories are restored.

- We use Bitbucket’s database migration functionality to migrate from the MSSQL server to the PostgreSQL container.

Summary

The management of Atlassian applications does not have to be painful.

If you’re just getting started with Atlassian applications then you should really consider all the available options. My colleague did a blog post about considerations when getting started with Atlassian applications and it’s well worth a read.

If you happen to be in a situation where upgrades or migrations are painful, take the time to change now. If you don’t, you’ll likely end up with an even more problematic situation down the road.

Finally, a little bit about us and what we do with Atlassian applications.

- We provide general usage and configuration support with Atlassian’s cloud and server offerings.

- We are also an Atlassian training partner and provide hands-on training for our customers.

- For customers who can’t use Atlassian’s cloud offering, but do not want to host and manage the application(s) themselves, we provide a fully managed option as part of the Eficode ROOT DevOps platform.

- We help customers install and maintain on-premises Atlassian Server and Data Center solutions.
