Outgrowing Terraform and adopting control planes

This blogpost will describe why teams are outgrowing Infrastructure-as-Code tools like Terraform and scaling their cloud adoption through control planes line Crossplane

In his Keynote at Crossplane Community Day, Bassam Tabbara, Founder & CEO of Upbound, intelligently described how the superpowers behind Kubernetes are:

- the ‘declarative management by desired-state’

- ‘automated controller logic’ to reconcile desired state with actual state

This operating model is part of the reason why we can easily manage hundreds of compute nodes and thousands of containers, manage networking between them, autoscaling etc.

Another reason is that the declarative API works at a useful abstraction level that does not result in cognitive overload. For example, a Kubernetes Deployment allows us to define a container image, how many replicas should be running, and then Kubernetes controllers take over to do the scheduling, monitoring, restarts and so on.

The book ‘Cloud Native Infrastructure’ by Justin Garrison and Kris Nova describes ‘Cloud Native Infrastructure’ as ‘...infrastructure that is hidden behind useful abstractions and, controlled by APIs, managed by software…’. The book goes on to describe the reconciler pattern - the very essence of this new paradigm called ‘Control planes’ and which is poised to replace more traditional Infrastructure-as-Code tools like Terraform.

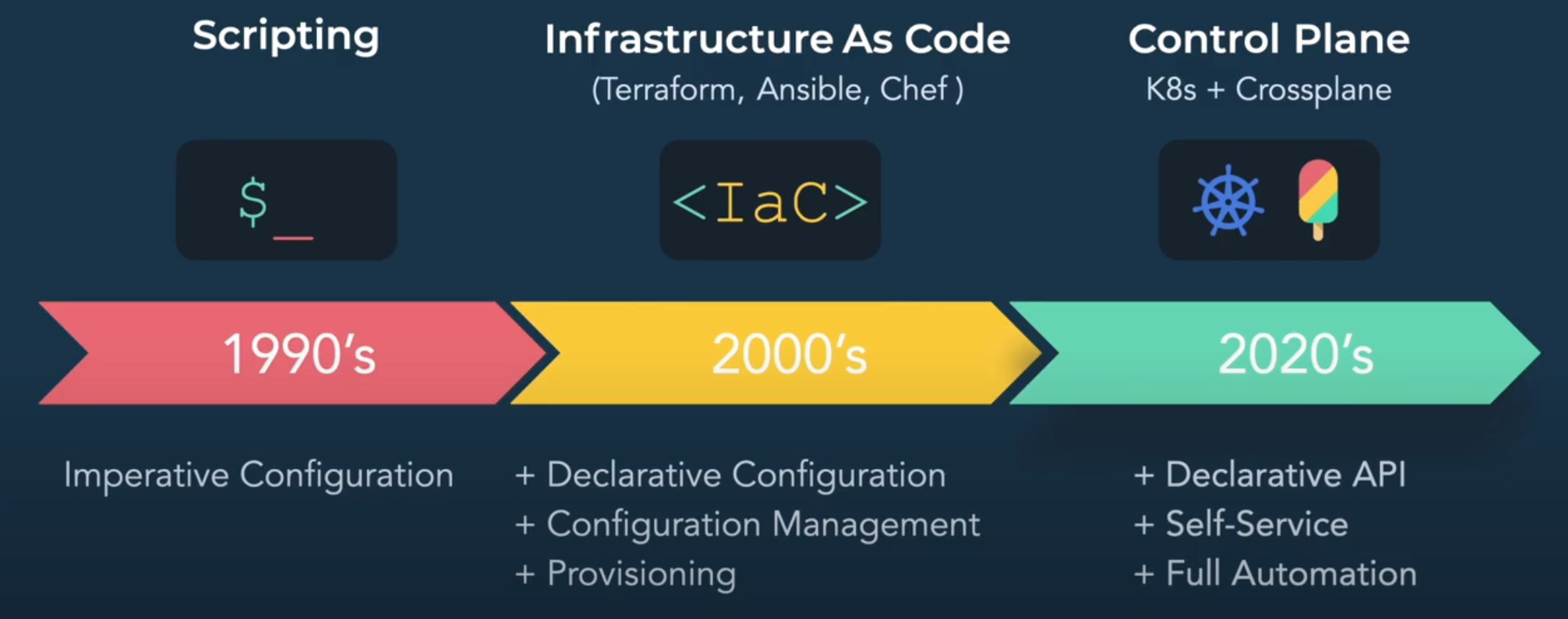

Source: Bassam Tabbara's keynote, mentioned at the top

Composability - an enabler for scaling cloud adoption

It is essential that control planes provide useful abstractions. Cloud platforms are huge and complicated, typically providing hundreds of services, each with many different configurations and settings.

This is a major limiting factor in the adoption of cloud. Platform and SRE teams can be expected to champion the cloud, but generally upskilling all developers to be able to take advantage of the cloud does not scale. When the ‘average’ developer look at the cloud, they may see this - a lot of building blocks of different size and color:

…and what they really want was just the following with the only freedom being the colour:

The generality of the cloud platform means that it is not providing the right abstraction for the larger majority of developers. The Kubernetes API provided an abstraction of compute nodes, container runtimes like Docker, networking, and storage. This made deploying and operating applications on top of a container cluster much easier.

Composability is the ability to build higher level abstractions using lower level abstractions and is key to scaling adoption of any technology - including cloud. But don’t take my word for it, see what Kelsey Hightower says about Crossplane and composability.

Outgrowing Terraform

In the realm of Terraform, composability comes in the form of modules. Using Terraform modules is a giant leap for mankind, but they are just templates that spit out all the smaller components. Managing platforms built from modules, very quickly turns into management of the smaller components - Terraform modules are a very leaky abstraction!

Managing large monolithic infrastructures is a pain and often this is remediated by splitting the infrastructure into smaller blocks, aka. as a ‘tiered architecture’ where each block is a self-contained Terraform plan and dependencies across tiers are handled through ‘remote state’.

While better than the monolithic architecture, this is still a brittle and requires careful manual orchestration of the individual tiers due to dependencies.

Terraform is by design a tool we run on-demand whenever we want to update our infrastructure. Automating Terraform is often done with e.g. Atlantis, which introduces a GitOps/ChatOps model for Terraform. However, this will not handle drift or repairs of faulty infrastructure components. We will manually have to detect such situations and re-run Terraform because this model is event-driven and not based on a control plane reconciliation loop.

When it comes to access control, the GitOps model means that everyone with access to our source Git repository can change our infrastructure. We cannot differentiate who can do what based on the abstraction which makes sense to us. We may try to design identities for running Terraform (e.g. IAM roles) with segregated permissions, but since this is based on the underlying cloud resource model it is very difficult to get right.

This goes back to Terraform modules being leaky abstractions. Instead we want access control to be defined using the abstraction layer at which we operate. In Kubernetes the RBAC model concerns itself with resources such as Deployments and Pods across namespaces and we do not care how containers are scheduled to compute nodes or network segregation implemented with IP-tables etc.

See also what Nic Cope of Upbound says about Terraform vs. Crossplane - he said it much better than I can.

Fight the reluctance to change

Using control planes means relinquishing control. Therefore seasoned SRE teams may feel a reluctance in trusting the control plane. While we still need to see how e.g. Crossplane fairs in the heat of the battle, we will eventually adopt control planes also for cloud. Not being distracted by details and instead abstracting them away and letting the ‘machines’ handle the details is a natural evolution in tech.

We have seen this all the way from operating systems, compilers and container platforms like Kubernetes. Knowing that this is the inevitable evolution, we should embrace control planes. If Crossplane does not strike the right balance and abstraction level, the next control plane will.

Changing the mental model of infrastructure

Ops and SRE teams may be concerned about ‘how they can ensure uptime and availability’ with the ‘eventual consistency’ model of control planes.

This is a valid concern and points to another common anti-pattern in infrastructure designs today. Many organizations will have e.g. ‘dev’, ‘stage’ and ‘production’ Kubernetes clusters. For example, if we change the desired state of the ‘production’ cluster: how do we guarantee our end-users do not experience our applications being down while the control plane (which we by design have very little control of) consolidates the new state? The concern is valid, but the question is wrong!

The concern here stems from the fact that our ‘production’ cluster is a pet, not a cattle.

With Kubernetes we learned to change away from individual pet servers into cattle containers, i.e. characteristics like uptime and availability was handled through Kubernetes orchestrating multiple containers. Looking forward, we need to learn to apply the same model to all infrastructure.

We should not design with a single ‘production’ cluster. Instead we should realise that we at any time may have a number of ‘production’ clusters and factor this into our design - much like we take Kubernetes Deployments and Services for granted and let the Kubernetes Control plane handle all the complexities of managing the individual containers and the overall characteristics of our application.

Published:

Updated: